As artificial intelligence (AI) writing tools like ChatGPT become more advanced, many educators are concerned about students using these tools to generate content for assignments. In response, the academic integrity company Turnitin has developed new AI writing detection capabilities. But how exactly does the system work, and what should students and teachers know about it?

In this blog post, we'll provide an overview of Turnitin's AI detector, including how it functions and what its results mean. The goal is to help students and teachers in academia build a shared understanding of this emerging technology.

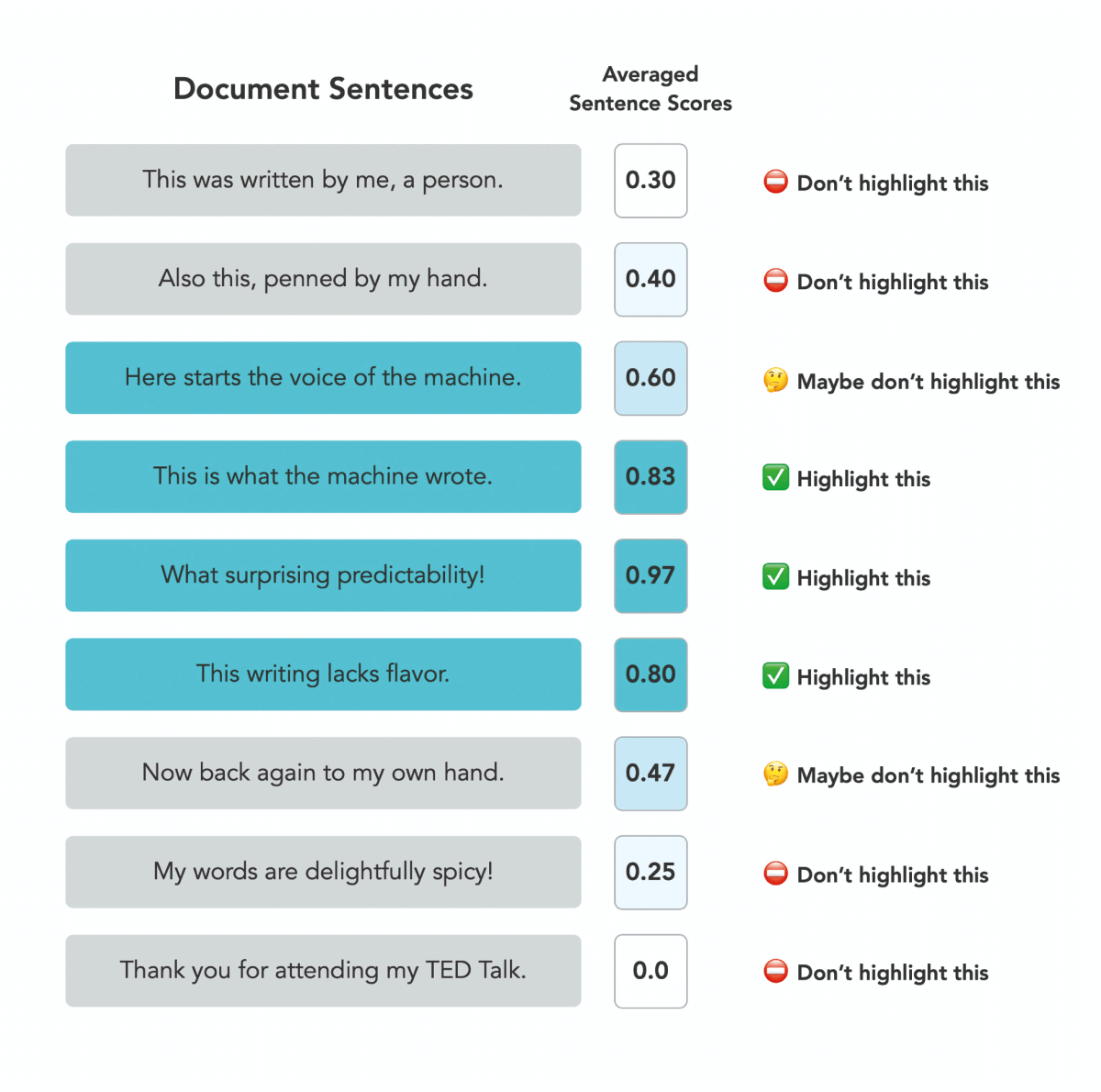

According to Turnitin's FAQ, the AI detector breaks submissions into text segments of "roughly a few hundred words" and analyzes each one using a machine learning model. Specifically, the FAQ states:

"The segments are run against our AI detection model and we give each sentence a score between 0 and 1 to determine whether it is written by a human or by AI. If our model determines that a sentence was not generated by AI, it will receive a score of 0. If it determines the entirety of the sentence was generated by AI it will receive a score of 1."

The model generates an overall AI percentage for the document based on the scores of all the segments. Currently, it is trained to detect content generated by models like GPT-3 and ChatGPT.
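To make the roll-up from individual scores to a document-level figure more concrete, here is a minimal sketch. Turnitin does not publish its exact aggregation method, so the plain average used below, the function name `overall_ai_percentage`, and the sample scores are all assumptions made purely for illustration.

```python
from statistics import mean


def overall_ai_percentage(sentence_scores):
    """Average per-sentence scores (0 = human, 1 = AI) into a percentage.

    Assumption: a plain mean. Turnitin's real aggregation is not public.
    """
    if not sentence_scores:
        raise ValueError("need at least one sentence score")
    if any(not 0.0 <= s <= 1.0 for s in sentence_scores):
        raise ValueError("scores must be between 0 and 1")
    return round(100 * mean(sentence_scores))


# Hypothetical scores for a five-sentence passage: two sentences judged AI.
scores = [0.0, 0.0, 1.0, 1.0, 0.0]
print(overall_ai_percentage(scores))  # 40
```

Under this simplified view, a document where two of five sentences score 1 would come out at 40%. The real system may weight or smooth scores differently.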

It's important to understand what the AI percentage actually means. As the FAQ explains:

"The percentage indicates the amount of qualifying text within the submission that Turnitin’s AI writing detection model determines was generated by AI. This qualifying text includes only prose sentences, meaning that we only analyze blocks of text that are written in standard grammatical sentences and do not include other types of writing such as lists, bullet points, or other non-sentence structures."

So the percentage reflects predicted AI writing in prose sentences, not necessarily the full submission. Short pieces under 300 words won't receive a percentage.
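The idea of "qualifying text" can be sketched in a few lines of code. This is only a rough approximation: the rule for spotting list items (a leading bullet or number), the helper names `qualifying_prose` and `gets_percentage`, and the choice to count words across the whole submission are all assumptions, not Turnitin's actual logic.

```python
import re

MIN_WORDS = 300  # threshold mentioned above; below it, no percentage is shown

# Assumption: a line starting with a bullet or a list number is "non-prose".
LIST_LINE = re.compile(r"^\s*(?:[-*•]|\d+[.)])\s+")


def qualifying_prose(text):
    """Return only the lines that look like prose, dropping list items."""
    lines = [ln for ln in text.splitlines() if ln.strip()]
    return [ln for ln in lines if not LIST_LINE.match(ln)]


def gets_percentage(text):
    """True if the submission is long enough to receive an AI percentage."""
    return len(text.split()) >= MIN_WORDS


sample = "Intro sentence here.\n- bullet one\n2. numbered item\nClosing sentence."
print(qualifying_prose(sample))  # ['Intro sentence here.', 'Closing sentence.']
print(gets_percentage(sample))   # False
```

In this sketch, the two list items are excluded from analysis, and the sample is far too short to receive a percentage at all.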

Turnitin aims to maximize detection accuracy while keeping false positives, where human writing is incorrectly flagged as AI-generated, under 1%. That low rate comes with a trade-off: some AI-generated text will go undetected. The FAQ states:

"In order to maintain this low rate of 1% for false positives, there is a chance that we might miss 15% of AI written text in a document."

For documents with less than 20% AI detected, results are considered less reliable. And for shorter pieces, sections of mixed human/AI writing could get labeled fully AI-generated.
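Even a 1% false positive rate adds up across many submissions. The arithmetic below is a minimal sketch that treats 1% as the worst case and assumes each document is flagged independently of the others. The figure of 200 submissions and the function names are hypothetical.

```python
FALSE_POSITIVE_RATE = 0.01  # upper bound quoted above


def expected_false_flags(n_human_docs, fpr=FALSE_POSITIVE_RATE):
    """Expected number of human-written documents flagged by mistake."""
    return n_human_docs * fpr


def prob_at_least_one_false_flag(n_human_docs, fpr=FALSE_POSITIVE_RATE):
    """Chance that at least one human-written document is flagged.

    Assumes each document is flagged independently of the others.
    """
    return 1 - (1 - fpr) ** n_human_docs


n = 200  # hypothetical number of fully human-written submissions
print(expected_false_flags(n))                    # 2.0
print(round(prob_at_least_one_false_flag(n), 2))  # 0.87
```

Under these assumptions, a teacher grading 200 human-written submissions should expect about two false flags, and there is roughly an 87% chance of seeing at least one.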

It's important that teachers don't treat the AI percentage as definitive proof of misconduct. As Turnitin states, the tool is meant to provide data that informs academic integrity decisions, not to make the final judgment.

Students who feel they have been falsely accused can contest the results with their teacher and request a manual review. Turnitin's tool is still a work in progress, so feedback can help improve accuracy over time.

Moving forward, students and teachers will need to have open and honest conversations about AI writing. Rather than fostering an adversarial dynamic, the goal should be to uphold academic integrity while also advancing learning. With care on both sides, this emerging technology can be handled in a fair, transparent way.

*This blog post was written by AI, with fact-checking and edits from the HideMyAI staff and tool. This is how all content should be created in the AI era.