In this article we will be taking a look at the usage of AI in education and whether schools and universities should be encouraging or policing it.

We will take a look from numerous perspectives to get the best understanding of this new concept in the academic arena. By taking a look at the pros and cons of using AI in an educational context, we can then understand the impacts, outcomes, and potential policies that can be implemented. Let's dive right in.

As more and more students begin using AI to draft essays, aid in research, and be more efficient overall, many institutions and instructors don't know how to react. Should they embrace this newfound technology and modify the way that they instruct students? Or should they reject this modern phenomenon, and punish students who are caught using AI in their work? On the surface, both of these approaches hold water. However, let's take a closer look at how students are using AI, how it is impacting their work, and how AI-enabled and non-AI outputs compare. From all this information, we can begin to answer the question: should students be punished for using AI?

First, let's review how students are using AI in their general day to day. The usage of AI falls into three distinct categories. One is research, two is writing, and three is editing. Students will leverage conversational AI like ChatGPT, and the GPT LLMs, to aid in the research process. They'll ask questions like "When did this historical figure do this historical action?" or "How does this molecule interact with this other molecule?" Detailed questions like these can be easily answered by the leading AIs of 2023.

They can also use this to write content for themselves. Instead of simply researching and finding facts which then must be verified, they could have the AI output entire paragraphs or even essays from individual prompts. Of course, this writing isn't incredibly personable, and will need deep fact checking to ensure that the so-called facts mentioned in the content are accurate and timely.

Students can also edit existing writing for clarity, grammar, and more. They can use tools like conversational AI, or more legacy offerings like Grammarly, which recently added AI-enabled features.

Compared to a pre-2021 world, when generative AI wasn't as accessible, students are now much more efficient with their work. The entire process of research and writing has been disrupted. But, is this disruption for the best or for the worst? Of course, that question is a bit subjective, but let's take a look at both viewpoints.

The positive viewpoint is that, now that students are able to be more efficient with the actual process of writing, they can spend more time on learning and synthesizing facts and information. Therefore, a student who uses AI may actually learn more about the content if they devote the same amount of time and effort to an assignment as somebody not using AI.

The negative viewpoint is that the student will simply use AI to regurgitate generic information and facts which may not be accurate due to hallucinations, submit the assignment, and go on with their day.

Taking a look at both of these viewpoints, both cases will likely shake out to be true. However, the students that use AI to quickly finish an assignment will likely be attempting the same thing even in a non-AI environment. A student who doesn't want to learn cannot be forced to learn. And, students who use AI to create entire papers are easily detected by leading AI detectors, unless they run their content through a humanization tool like HideMyAI. Even then, it's important that the student reads through the entire output and checks it for clarity, writing style, and of course accuracy. All AI models are known to hallucinate. This is a phenomenon where the AI outputs a piece of information that seems accurate and sounds correct. However, after deeper research, it becomes apparent that it is not a fact at all.

Therefore, if somebody simply generates an essay and submits it, without humanizing the AI output or fact checking the information within, they run the risk of being detected. However, if a student leverages AI to write content, and then goes into a deep fact checking sequence, they may actually learn the information even better during the process of researching all of these so-called facts that the AI has output.

Now, let's take a look at the question of whether a student should be punished for using AI in their output. In our opinion, absolutely not. Artificial intelligence is here to stay, and these tools are already being used in the professional workforce. If you are an educator banning the use of AI, you are putting your students at a disadvantage when they enter the professional world. After all, the entire purpose of education, specifically higher education, is to prepare a student for the real world.

By encouraging the responsible use of AI in education, you can teach your students how best to leverage these tools, giving them an edge in the professional world. By prioritizing the accuracy of AI generated content, you'll teach them the skills that are necessary to quickly fact check, synthesize information, and leverage AI for academic and personal use.

Artificial intelligence (AI) content generators are rapidly transforming how we produce written content. In the past few years, advanced AI writing tools like ChatGPT, Google's Bard, and Jasper have enabled anyone to generate high-quality text on demand for a wide range of purposes, sparking the desire to check if content contains AI-written text.

In this guide, we'll provide an overview of the leading AI content tools, discuss key indicators to identify AI-generated text, and review emerging attribution standards and detection methods. With AI poised to fundamentally disrupt content creation, it's crucial to understand how to validate if a piece of writing comes from humans, or is directly copy/pasted from an AI generator.

Using AI to kickstart writing is fine, so long as you put in the work to confirm stuff and polish it up. The aim of programs that detect AI isn't to call all computer-written stuff unethical. It's more about spotting AI content that someone was lazy and just copied without credit.

AI detectors help maintain honesty by telling apart stuff that was carefully put together from thoughtless copying. They aren't meant to punish using AI, but to encourage thoughtful use of computer-generated text. With some elbow grease and being upfront, AI material can boost human writing while keeping it real.

* this section was written with AI 😱, and edited by a human.

It's actually quite easy to tell if something was written by AI, especially if you've read enough outputs that you know were written by it. In fact, LLMs (large language models, what text-generating AI like ChatGPT is based on in 2023) are essentially statistical machines! They identify the most likely next word (or word fragment) based on the patterns they've learned from a massive dataset of text.
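To make the "statistical machine" idea concrete, here is a deliberately tiny sketch in Python. It is not how ChatGPT works internally (real LLMs use neural networks trained on vastly more text); it only illustrates the idea of picking the most likely next word from patterns seen in training data. The function names and the sample sentence are made up for illustration.

```python
from collections import Counter, defaultdict

def train_bigram_model(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        followers[current_word][next_word] += 1
    return followers

def most_likely_next(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = model.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]

# Toy "dataset" (hypothetical); a real model learns from billions of words.
corpus = "the dog chased the cat and the dog barked at the mailman"
model = train_bigram_model(corpus)
print(most_likely_next(model, "the"))  # "dog" follows "the" most often here
```

Because the model always reaches for the statistically common continuation, its output tends toward safe, generic phrasing, which is part of why unedited AI text can feel impersonal.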

For example, we've hired a lot for this company, and it's incredibly apparent when a cover letter is written by ChatGPT and directly copy/pasted without any edits. It's very impersonal and makes a bad impression. When you're able to compare an AI created piece of writing with a human written one, especially if they have the same instructions (essay, cover letter, schoolwork, etc...) it's VERY easy to tell that AI created it.

ChatGPT is a free AI content writer, which can be used as a rough AI content detector. For example, here's a prompt we use a lot:

Was this content written with AI or by ChatGPT? Consider the structure, wording, and general content before answering.

ChatGPT Prompt

From the example below, you can see that ChatGPT notes that it was "written in a way that could be written by AI".

However, this is a relatively imperfect method, as noted in the response itself. Also, the prompt is a bit leading and may get you a false positive. This is a worthwhile read discussing the limitations of OpenAI-derived content detection methods:

https://foundation.mozilla.org/en/blog/how-to-tell-chat-gpt-generated-text/

These detectors are only partially accurate, as we'll demonstrate in this section.

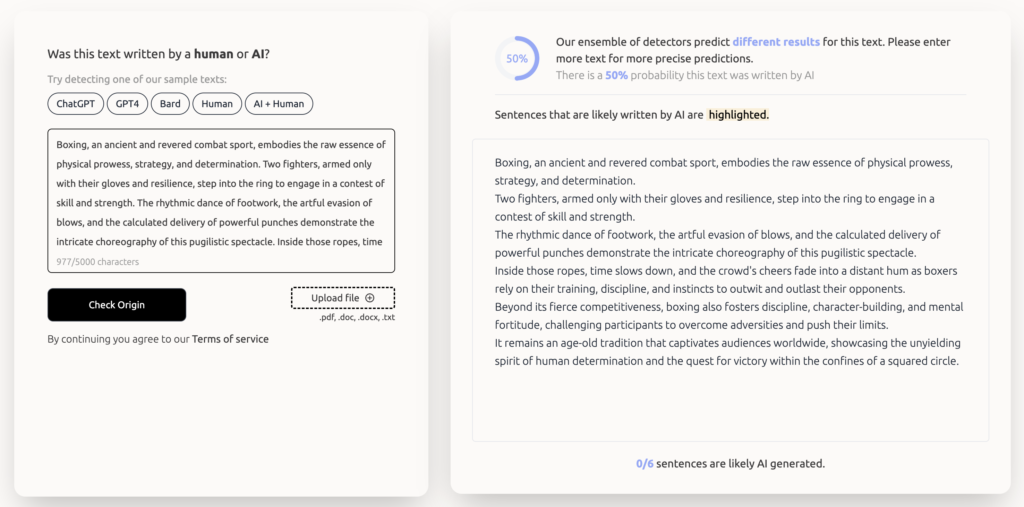

GPTZero is BY FAR the best AI content detector online today, mainly due to the fact that they raised $3 million from VC and actually run their own backend models.

It's not perfect - here you can see us inputting 100% AI content, but it only returns a 50% probability of being written by AI. However, it won't typically give you a false positive like many others will, meaning that it's one of the more accurate tools online.

The longer the content, the more accurate the output, and we've had great success in identifying AI content that is copied directly out of ChatGPT.

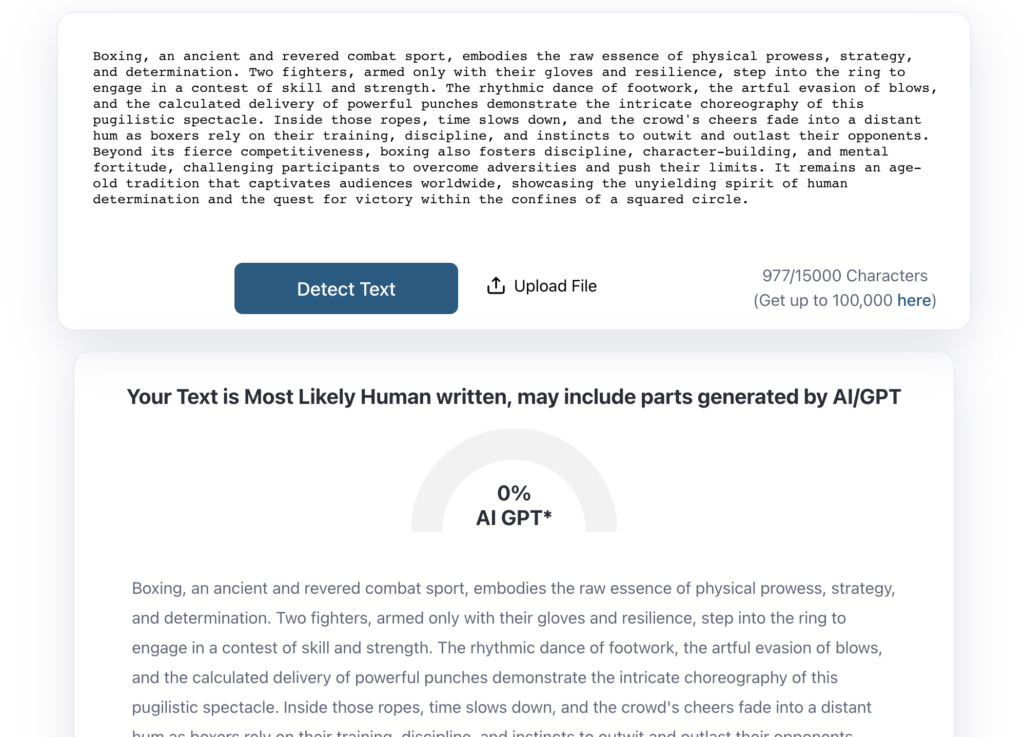

Here's another example of an AI detector, which isn't as great, but is 100% free and works sometimes. In this example, however, it's completely wrong. This is a great example of why you shouldn't rely on free AI detectors, especially ones that use other companies' APIs and aren't backed by investment and innovation.

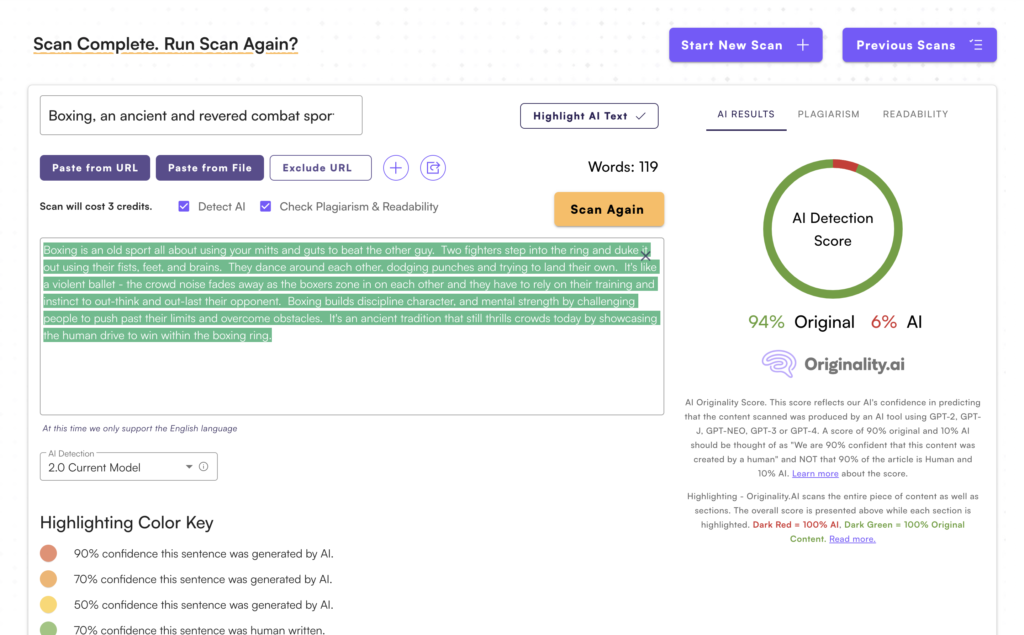

Paid AI detectors fare much better and can typically sniff out direct AI content with ease. Perhaps the best AI detector on the market is Originality AI, which recently updated their detection to a 2.0 model that works well.

The UI is simple and it will give you the probability that it's AI vs original content. You can also hover over specific sentences and paragraphs to get a more granular look into the AI content within writing.

Despite the accuracy, there are still some false positives, so treat any single score with caution.

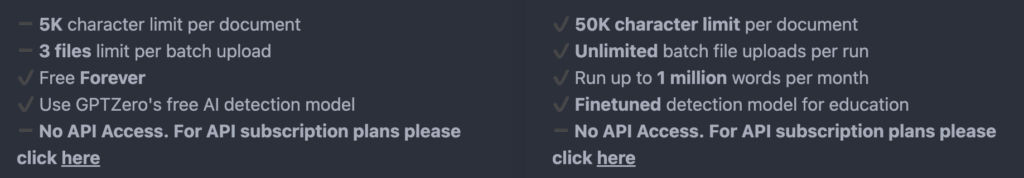

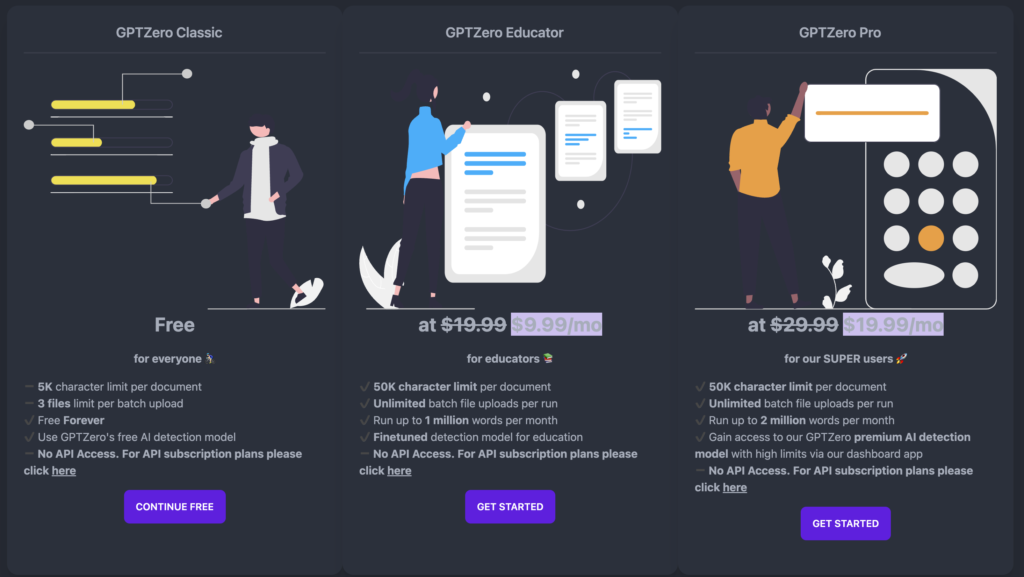

GPTZero also has its own paid plan, which includes a much higher character limit, batch file uploads, 1 million words per month, and a better finetuned model for specific use cases like education. There's also an API for developers.

Educators typically want to determine if AI was used to plagiarize somebody else's content. Most tools have plans specifically for this use case.

If your institution uses TurnItIn, they've released AI Writing and ChatGPT detection in beta to some users subscribed to specific plans. Many institutions get this for free so it's worth checking out, but there's no public version of this tool.

This tool/detector can be bypassed, as we have seen firsthand.

GPTZero offers a discounted plan for educators, with a 50k character limit per document, the ability to upload multiple documents, and an even better detection model.

It's important to stress that AI content is the future, and educators should be learning to implement it in their teaching, not restricting it. By restricting it, you'll just put your students at a disadvantage once they enter the professional world, where AI is everywhere for good reason (save time and money).

Yes, all AI detectors can be beaten. It's simple: just heavily edit AI generated content, adding your own information and knowledge to it, and it'll be relatively undetectable.

Or, even better, just write the content yourself!

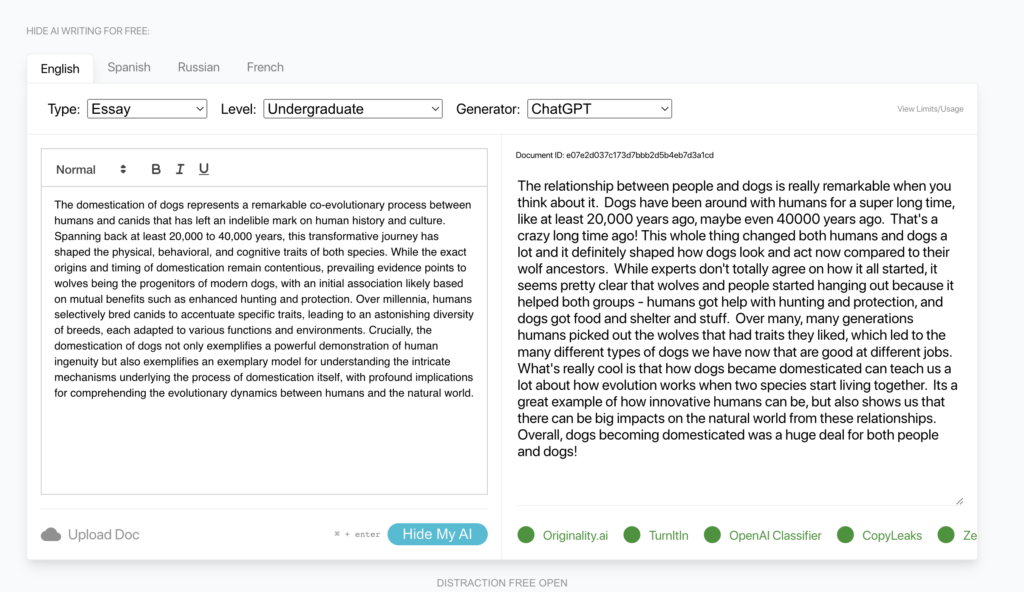

If you're looking to pass AI detectors at scale, leveraging the efficiencies of AI but still wanting a humanlike, undetectable tone, you can use a tool like HideMyAI, which will also bypass AI detectors. Here's an example with the Originality tool, with the same input used for the first test.

First, we take the output, directly generated by ChatGPT and place it in the HideMyAI AI Humanizer tool. We've selected "English" as the language, "Blog Post" as the type, "Professional - Regular" as the level, "Third Person" perspective, and "ChatGPT" as the generator.

The process takes about 15 seconds and outputs highly readable content that will bypass even the best (paid) detectors (seen below).

You can try it out for free at HideMyAI.

In the past, many detectors produced false positives, which got a lot of people who wrote legitimate content in deep trouble. Now, detectors are getting better and will typically find AI content, while erring on the side of caution (i.e. if they're not 100% sure, they'll let you know).

Even if you try and paraphrase AI content or lightly edit it, detectors will still likely figure out that it was written by AI.

However, there are tools out there that will bypass AI detectors by using AI of their own, HideMyAI, demonstrated in the section above, being one of them.

In this article, we'll compare the HideMyAI tool with other services and methods that people use to make AI content more humanlike.

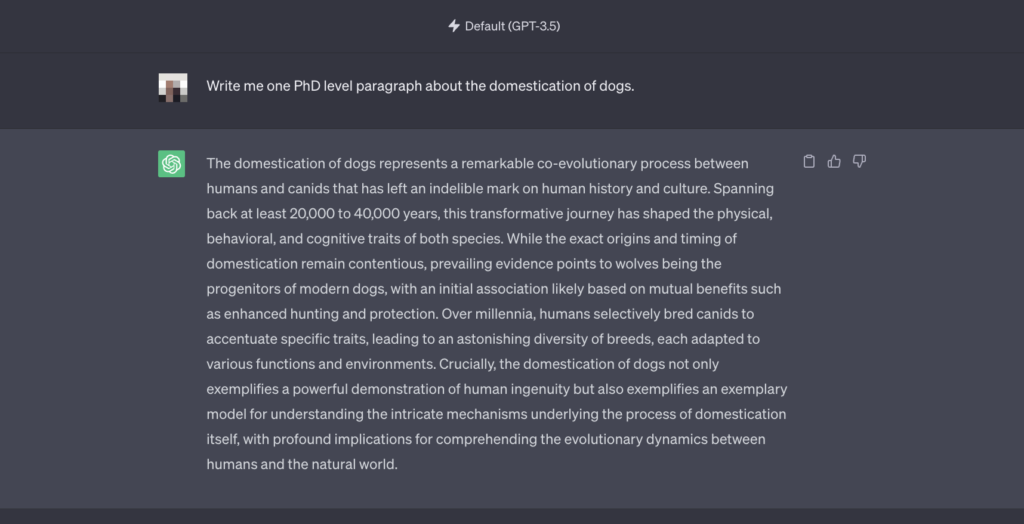

For this comparison, we'll use content generated on ChatGPT (free), using the prompt: "Write me one PhD level paragraph about the domestication of dogs".

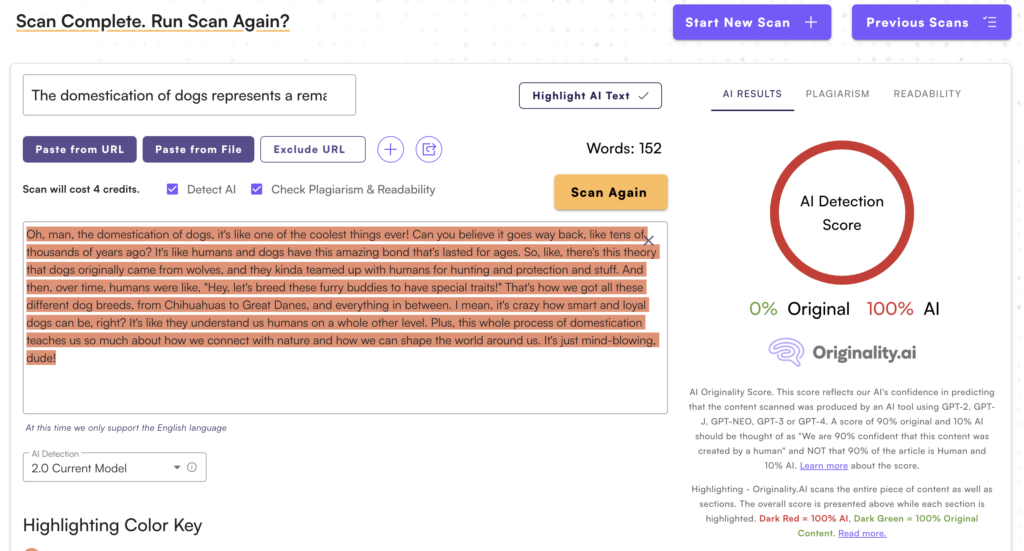

This is the output, roughly 150 words. It's good content, but it's apparent that it's written by ChatGPT, even to an untrained eye. Once passed through a leading detector tool, it's even more apparent with a 100% AI score.

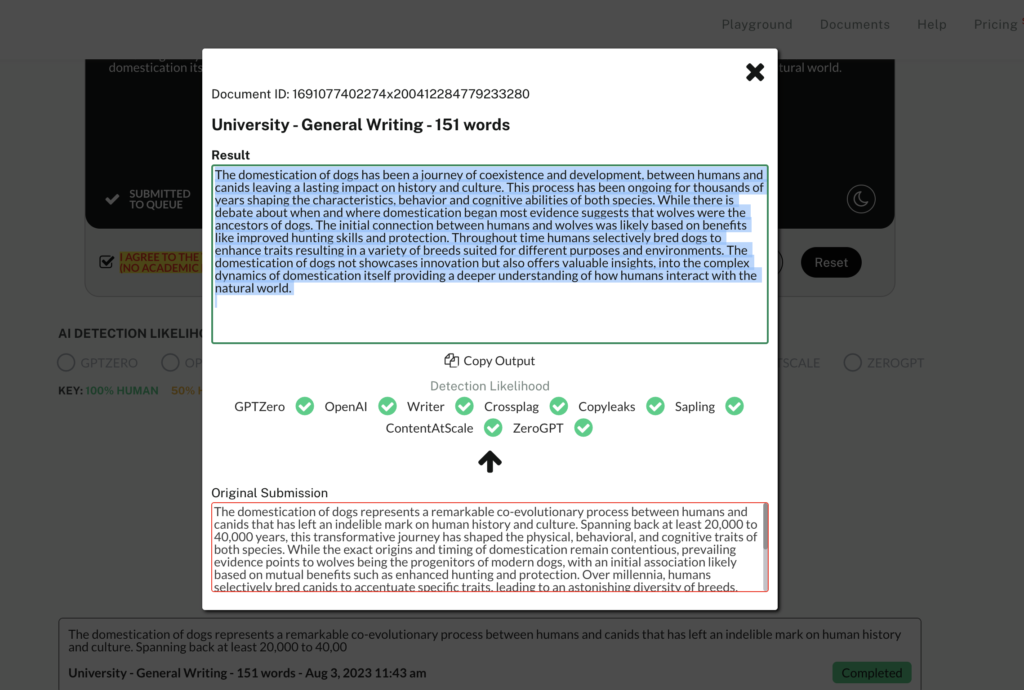

After passing it through the HideMyAI tool, the reworded content now scores a 98% originality score (with no human editing). The wordcount has also increased slightly.

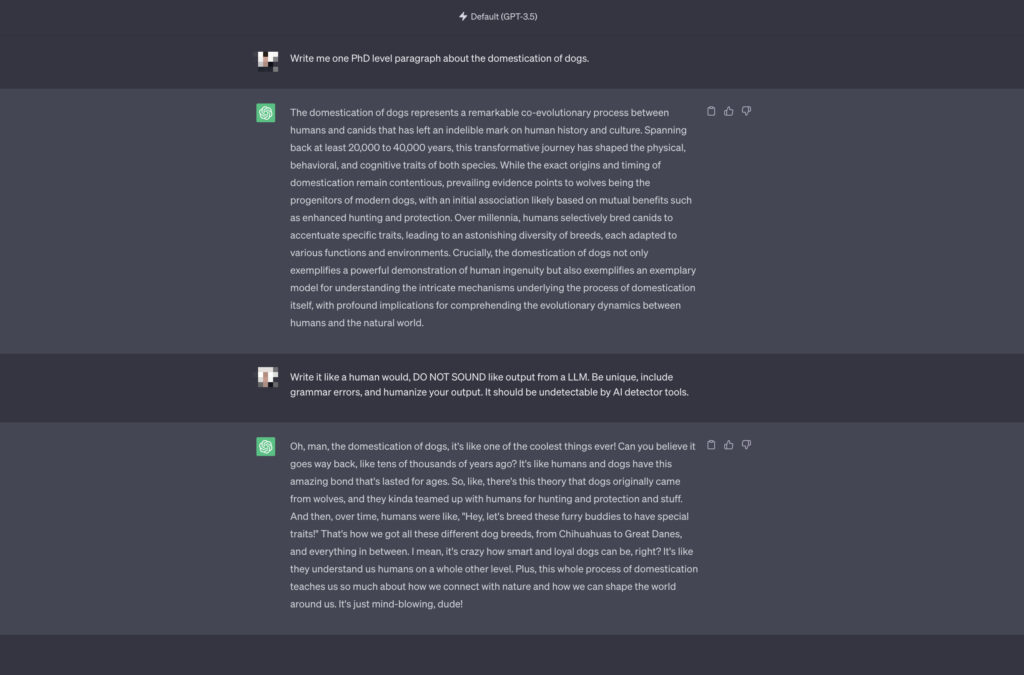

Many people try and tune the ChatGPT output to sound more human-like by using prompting techniques. For example, here's how I got the following output: "Write it like a human would, DO NOT SOUND like output from a LLM. Be unique, include grammar errors, and humanize your output. It should be undetectable by AI detector tools."

But now, it just sounds like an AI pretending to be a teenage girl. And the AI scanner sees right through it, scoring it as 100% AI generated:

Undetectable AI is a SaaS tool that people use to create human-like content. It's similar to HideMyAI, except a bit more limited.

HideMyAI supports many more parameters - you can select the type of writing, the writing level, and the generator you used to create the content.

For Pro users, there is also support for multiple languages including Spanish and French (this is in public beta at the time of writing this article) and bulk document uploading (perfect for SEOs), something that Undetectable doesn't support.

Compared to Undetectable.AI, HideMyAI outputs are a lot more coherent, natural and undetectable, though this sometimes results in a lower wordcount than the input.

In this AI detection scan for Undetectable AI, you can see that their output scores a 64% Original score, compared to the HideMyAI score of 98% Original. This is a common difference, regardless of our output settings (this example was Level: Secondary School, Type: Essay, Generator: ChatGPT).

The domestication of dogs has been a journey of coexistence and development, between humans and canids leaving a lasting impact on history and culture. This process has been ongoing for thousands of years shaping the characteristics, behavior and cognitive abilities of both species. While there is debate about when and where domestication began most evidence suggests that wolves were the ancestors of dogs. The initial connection between humans and wolves was likely based on benefits like improved hunting skills and protection. Throughout time humans selectively bred dogs to enhance traits resulting in a variety of breeds suited for different purposes and environments. The [etc...]

The relationship between people and dogs is really remarkable when you think about it. Dogs have been around with humans for a super long time, like at least 20,000 years ago, maybe even 40000 years ago. That's a crazy long time ago! This whole thing changed both humans and dogs a lot and it definitely shaped how dogs look and act now compared to their wolf ancestors. While experts don't totally agree on how it all started, it seems pretty clear that wolves and people started hanging out because it helped both groups - humans got help with hunting and protection, and dogs got food and shelter and stuff. Over many, many generations humans picked out the wolves that had traits they liked [etc...]

The HideMyAI team consists of AI researchers with a mission of making AI content sound more human, and we retrain our models/process at least once a week. That means our Humanized Content can be the best on the market, beating competitors and AI detectors alike.

Sign up today: https://hidemy.ai/register

As artificial intelligence (AI) writing tools like ChatGPT become more advanced, many educators are concerned about students using these tools to generate content for assignments. In response, the academic integrity company Turnitin has developed new AI writing detection capabilities. But how exactly does the system work, and what should students and teachers know about it?

In this blog post, we'll provide an overview of Turnitin's AI detector, including details on how it functions and what the results mean. The goal of this article is to promote understanding between students and teachers around this emerging technology, specific to academia.

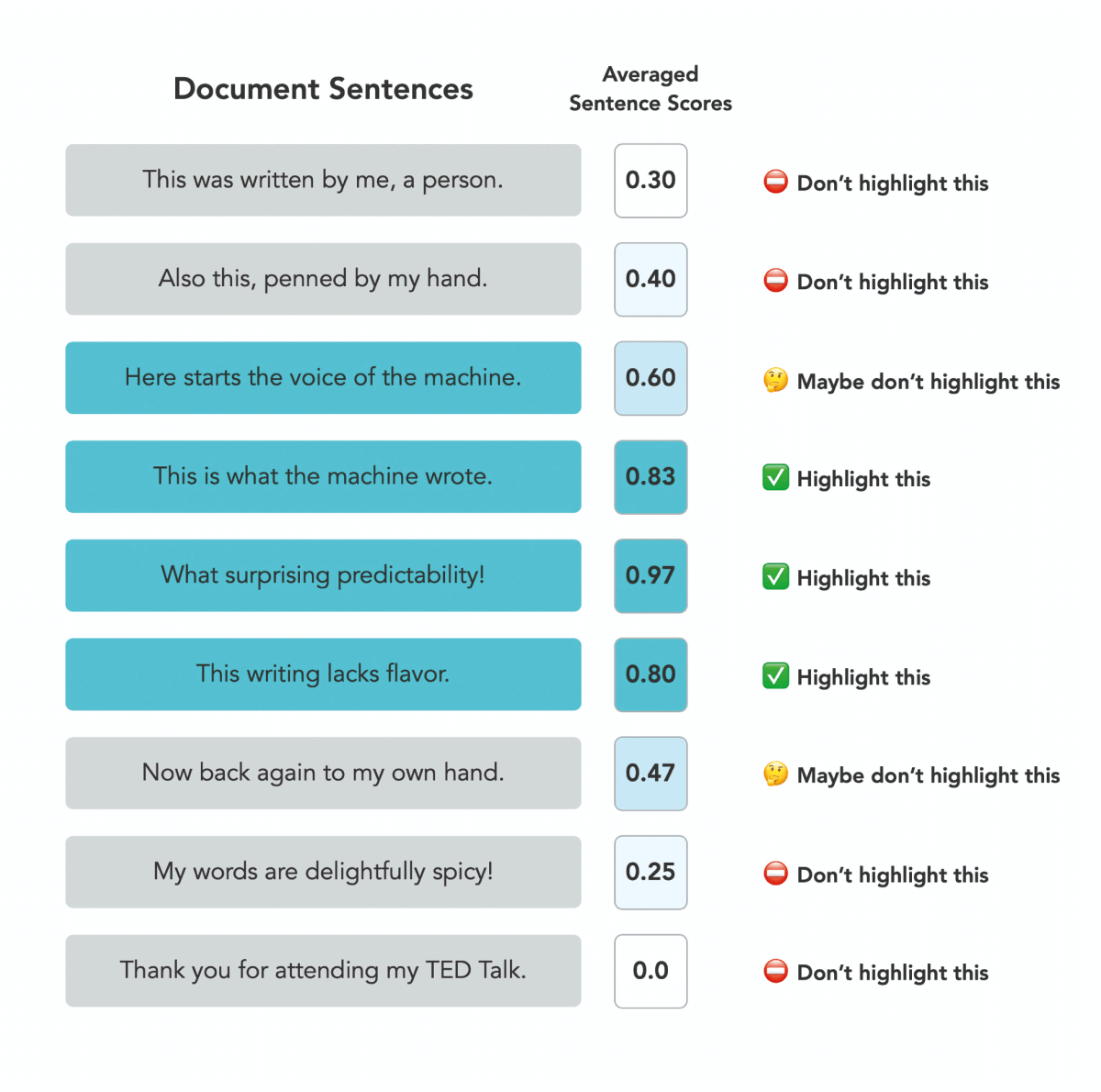

According to Turnitin's FAQ, the AI detector breaks submissions into text segments of "roughly a few hundred words" and analyzes each one using a machine learning model. Specifically, the FAQ states:

"The segments are run against our AI detection model and we give each sentence a score between 0 and 1 to determine whether it is written by a human or by AI. If our model determines that a sentence was not generated by AI, it will receive a score of 0. If it determines the entirety of the sentence was generated by AI it will receive a score of 1."

The model generates an overall AI percentage for the document based on the scores of all the segments. Currently, it is trained to detect content generated by models like GPT-3 and ChatGPT.

It's important to understand what the AI percentage actually means. As the FAQ explains:

"The percentage indicates the amount of qualifying text within the submission that Turnitin’s AI writing detection model determines was generated by AI. This qualifying text includes only prose sentences, meaning that we only analyze blocks of text that are written in standard grammatical sentences and do not include other types of writing such as lists, bullet points, or other non-sentence structures."

So the percentage reflects predicted AI writing in prose sentences, not necessarily the full submission. Short pieces under 300 words won't receive a percentage.
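To illustrate how per-sentence scores could roll up into a single document percentage, here is a minimal sketch in Python. Turnitin has not published its exact aggregation method, so everything here is an assumption: the `ai_percentage` function name, the 0.5 cutoff for counting a sentence as AI-written, and weighting sentences by word count are all hypothetical. Only the 0-to-1 sentence scores, the prose-only scope, and the 300-word minimum come from the FAQ.

```python
def ai_percentage(scored_sentences, min_words=300, threshold=0.5):
    """Estimate the share of qualifying prose that looks AI-written.

    scored_sentences: list of (sentence, score) pairs, where score is a
    value between 0 (human) and 1 (AI) from some detection model.
    Only prose sentences should be passed in (no lists or bullet points).
    Returns None for submissions below the minimum length.
    """
    total_words = sum(len(sentence.split()) for sentence, _ in scored_sentences)
    if total_words < min_words:
        return None  # too short to receive a percentage

    # Assumption: a sentence counts as AI-written at or above the threshold.
    flagged_words = sum(
        len(sentence.split())
        for sentence, score in scored_sentences
        if score >= threshold
    )
    return round(100 * flagged_words / total_words)

# Synthetic example: 30 human-looking and 10 AI-looking ten-word sentences.
human = ("one two three four five six seven eight nine ten", 0.0)
ai = ("one two three four five six seven eight nine ten", 1.0)
document = [human] * 30 + [ai] * 10
print(ai_percentage(document))    # 100 of 400 words flagged -> 25
print(ai_percentage([human] * 5)) # only 50 words -> None
```

The point of the sketch is the shape of the calculation: the percentage is a share of qualifying prose, not of the whole file, and very short submissions produce no number at all.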

Turnitin aims to maximize detection accuracy while keeping false positives under 1%. But there's still a chance of incorrectly flagging human writing as AI-generated. The FAQ states:

"In order to maintain this low rate of 1% for false positives, there is a chance that we might miss 15% of AI written text in a document."

For documents with less than 20% AI detected, results are considered less reliable. And for shorter pieces, sections of mixed human/AI writing could get labeled fully AI-generated.
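A quick back-of-the-envelope calculation shows why even a 1% false positive rate matters at scale. The sketch below, in Python, simply multiplies the rates quoted in the FAQ by some made-up volumes; the class sizes and word counts are illustrative assumptions, not Turnitin data, and the function names are our own.

```python
def expected_false_flags(human_written_docs, false_positive_rate=0.01):
    """Expected number of fully human-written documents wrongly flagged as AI."""
    return human_written_docs * false_positive_rate

def expected_missed_words(ai_written_words, miss_rate=0.15):
    """Expected amount of AI-written text that goes undetected."""
    return ai_written_words * miss_rate

# Hypothetical volumes: a university grading 2,000 honest essays in a term,
# and a single document containing 1,000 AI-written words.
print(round(expected_false_flags(2000)))   # about 20 honest essays flagged
print(round(expected_missed_words(1000)))  # about 150 AI-written words missed
```

In other words, at these assumed volumes a handful of honest students would still be flagged every term, which is exactly why a flag should start a conversation rather than end one.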

It's important that teachers don't treat the AI percentage as definitive proof of misconduct. As Turnitin states, the tool is meant to provide data to inform academic integrity decisions, not make definitive judgements.

Students who feel they have been falsely accused can contest the results with their teacher and request a manual review. Turnitin's tool is still a work in progress, so feedback can help improve accuracy over time.

Moving forward, students and teachers will need to have open and honest conversations about AI writing. Rather than an adversarial dynamic, the goal should be upholding academic integrity while also advancing learning. With care on both sides, this emerging technology can be handled in a fair, transparent way.

*this blog post was written by AI with fact checking and edits from the HideMyAI staff and tool. This is how all content should be created in the AI era.

AI watermarking refers to techniques that embed invisible identifying marks or labels into content generated by artificial intelligence systems.

The goal is to create a "machine-readable" indicator that content was produced by AI. This allows automated systems to identify and flag AI-created material.

There are a few potential technical approaches to watermarking AI content. For text, specialized Unicode characters or sequences not found in human writing could be used. For example, Google and others have proposed using a unique "AI" alphabet.
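As a toy illustration of the Unicode idea for text, the Python sketch below hides a short tag inside a sentence using zero-width characters, which are invisible when rendered. This is only a simplified assumption of how such a scheme might look, not a description of any vendor's actual watermark; the function names and the "AI" tag are hypothetical.

```python
ZERO = "\u200b"  # zero-width space, used here to encode bit 0
ONE = "\u200c"   # zero-width non-joiner, used here to encode bit 1

def embed_watermark(text, tag):
    """Hide `tag` as invisible characters right after the first word of `text`."""
    bits = "".join(f"{byte:08b}" for byte in tag.encode("utf-8"))
    hidden = "".join(ONE if bit == "1" else ZERO for bit in bits)
    head, sep, tail = text.partition(" ")
    return head + hidden + sep + tail

def extract_watermark(text):
    """Recover a hidden tag, or return None if no complete watermark is present."""
    bits = "".join("1" if ch == ONE else "0" for ch in text if ch in (ZERO, ONE))
    if not bits or len(bits) % 8:
        return None
    data = bytes(int(bits[i:i + 8], 2) for i in range(0, len(bits), 8))
    return data.decode("utf-8", errors="replace")

marked = embed_watermark("Dogs were domesticated long ago.", "AI")
print(marked)                     # looks identical to the original on screen
print(extract_watermark(marked))  # AI
```

A scheme this simple is also trivial to strip (deleting the invisible characters removes it), which hints at why robustness is an open problem for text watermarks.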

For images, certain pixels could be altered to form a hidden pattern or watermark imperceptible to humans. Researchers have experimented with embedding watermarks into sample images and changing their classification label as a "backdoor attack."
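The "alter certain pixels" idea can be sketched with the classic least-significant-bit technique. The Python example below treats an image as a flat list of 0-255 brightness values and overwrites the lowest bit of the first few pixels. It is a minimal teaching sketch under those assumptions (the function names and pixel values are made up), not a production watermark and not the backdoor approach mentioned above.

```python
def embed_bits(pixels, bits):
    """Write each watermark bit into the lowest bit of successive pixel values."""
    if len(bits) > len(pixels):
        raise ValueError("not enough pixels to hold the watermark")
    marked = list(pixels)  # copy so the original image data is untouched
    for i, bit in enumerate(bits):
        marked[i] = (marked[i] & ~1) | bit  # clear the lowest bit, then set it
    return marked

def extract_bits(pixels, n):
    """Read back the lowest bit of the first n pixel values."""
    return [p & 1 for p in pixels[:n]]

# Hypothetical 8-pixel grayscale "image" and a 4-bit watermark.
pixels = [200, 13, 77, 254, 0, 91, 128, 33]
watermark = [1, 0, 1, 1]

marked = embed_bits(pixels, watermark)
print(marked)                   # [201, 12, 77, 255, 0, 91, 128, 33]
print(extract_bits(marked, 4))  # [1, 0, 1, 1]
```

Each pixel changes by at most 1 out of 255, which is imperceptible to a viewer, but the mark would not survive recompression or resizing.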

For audio, parts of the sound wave spectrum could be modified to encode a watermark. For video, watermarks could be embedded in both the visual and audio components.

The key idea is that the watermarks are implanted by the AI system during content creation. Later, algorithms can detect the presence of the watermarks to identify the content as AI-generated.

AI watermarking has a number of proposed advantages. It could help identify harmful AI-generated misinformation like deepfake videos or audio. News organizations, social networks and governments are especially interested for this purpose.

It may assist copyright enforcement and digital rights management by tracing AI-created content back to its source. Watermarking allows creators to opt out of their work being used without permission to train AI models. Artists have raised concerns about this issue.

It promotes transparency by distinguishing human-created vs. machine-created material. Consumers may want to know the provenance of content.

And it provides a technical accountability solution for AI systems. Records of watermarks could also be used to audit when and how AI is deployed.

AI watermarking does raise some unanswered questions.

The robustness of watermarking techniques remains unproven. More research is still required to develop watermarking methods that are resistant to removal, spoofing, or other circumvention. Watermarks need to persist even after modification of the content.

In addition, watermarking needs to work seamlessly across diverse data types like text, images, video and audio. Each medium presents unique challenges and may require customized watermarking approaches. Universal techniques that work for all media without degradation of quality have yet to be perfected.

While AI watermarking aims to provide benefits like transparency and accountability, the technology poses some risks that merit careful consideration. Watermarking could enable increased surveillance and invasions of privacy if used to monitor individuals' media consumption and activities.

Authoritarian regimes could exploit watermarks to track dissidents or restrict free speech. There are concerns that watermarks could introduce security vulnerabilities or unintended distortions in AI models. If participation is mandatory, watermarking may impose unfair burdens on smaller AI developers with limited resources.

Reliance on watermarks could lead to a false sense of security if they are improperly implemented or fail to work as intended. Some critics also argue watermarking could unfairly stigmatize AI-generated content and constrain beneficial applications.

Using AI tools like ChatGPT to assist with content creation raises complex questions when it comes to watermarking and attribution, especially for student work. On one hand, using AI for drafting and editing could be seen as a valuable skill. Penalizing students for leveraging helpful technologies may discourage adoption.

However, passing off AI-generated content as fully original does raise ethical concerns around effort, merit and integrity. Watermarks provide a degree of attribution, but striking the right balance is challenging.

Schools should aim to cultivate disclosure, integrity and ethical AI utilization by students, rather than just focusing on detection and punishment. Mandatory watermarking risks scrutinizing student work in an overzealous manner based on limited signals.

More teacher-student discussion on appropriate AI use for assignments could help establish norms, in addition to watermarking.

Encouraging transparency and ethical reasoning is key – watermarking alone does not provide all the answers. Carefully considering the interplay between watermarks, academic integrity and productive AI usage by students is crucial.

While AI watermarking appears promising, there are reasonable concerns about its real-world viability, potential for misuse, and whether voluntary measures adequately protect individual rights.

As the technology evolves, we must thoroughly examine its societal impacts. More debate is required to shape watermarking into an ethical, secure and effective solution.